Controllable Dynamic Appearance for Neural 3D Portraits

ShahRukh Athar, Zhixin Shu, Zexiang Xu, Fujun Luan, Sai Bi, Kalyan Sunkavalli and Dimitris Samaras

TL;DR CoDyNeRF enables the realistic reproduction of illumination effects while animating Neural 3D Portraits.

Abstract

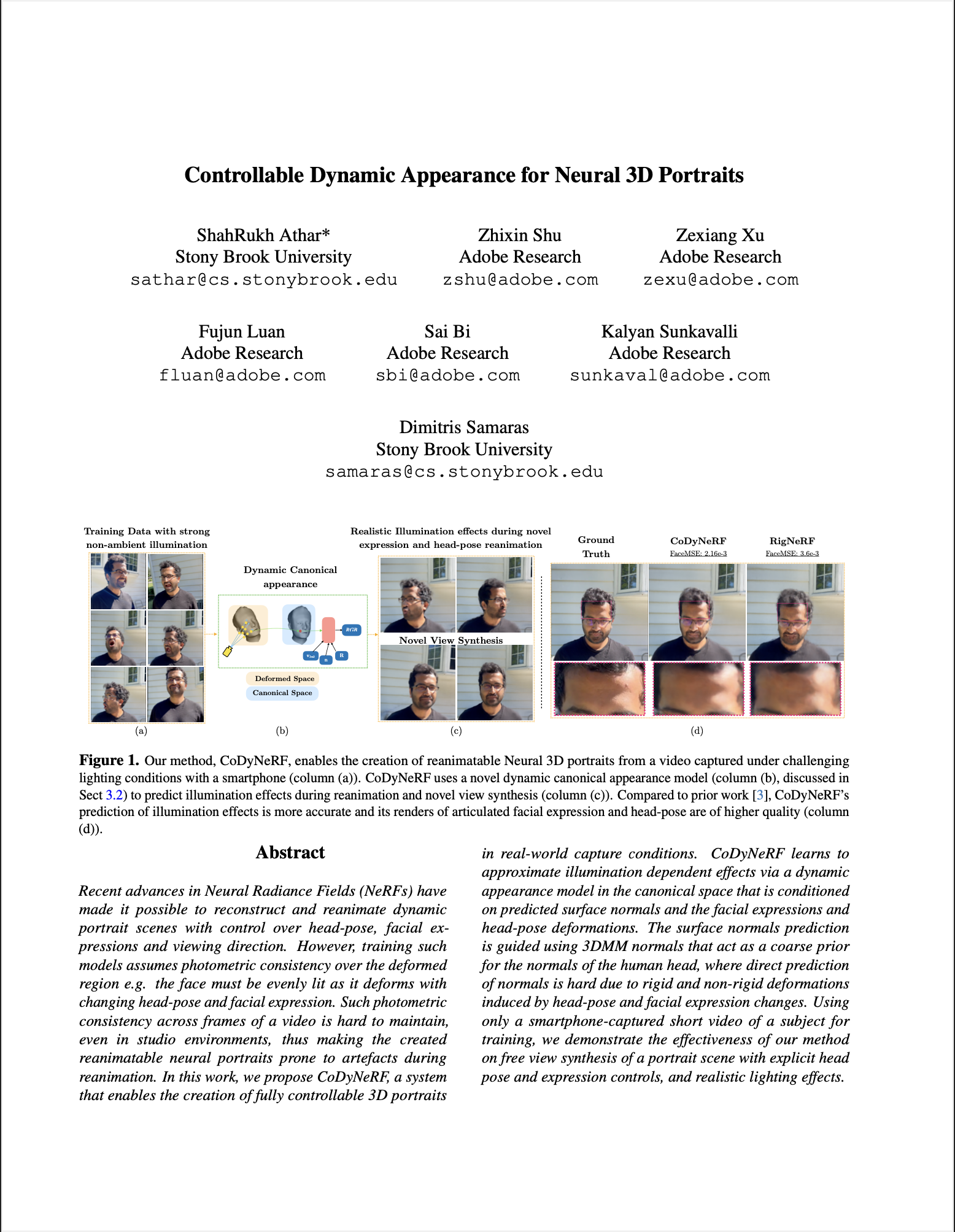

Recent advances in Neural Radiance Fields (NeRFs) have made it possible to reconstruct and reanimate dynamic portrait scenes with control over head pose, facial expressions and viewing direction. However, training such models assumes photometric consistency over the deformed region; e.g., the face must be evenly lit as it deforms with changing head pose and facial expression. Such photometric consistency across the frames of a video is hard to maintain, even in studio environments, which makes the resulting reanimatable neural portraits prone to artefacts during reanimation. In this work, we propose CoDyNeRF, a system that enables the creation of fully controllable 3D portraits in real-world capture conditions. CoDyNeRF learns to approximate illumination-dependent effects via a dynamic appearance model in the canonical space that is conditioned on predicted surface normals as well as on the facial-expression and head-pose deformations. Because the rigid and non-rigid deformations induced by head-pose and expression changes make direct prediction of normals hard, the normal prediction is guided by 3DMM normals that act as a coarse prior for the normals of the human head. Using only a short smartphone-captured video of the subject for training, we demonstrate the effectiveness of our method on free-view synthesis of a portrait scene with explicit head-pose and expression controls and realistic lighting effects.
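The abstract states that normal prediction is guided by 3DMM normals acting as a coarse prior, and that the canonical-space appearance model is conditioned on normals together with expression and head-pose deformations. The following is a minimal sketch under our own assumptions, not the CoDyNeRF implementation: `normal_prior_loss` uses a mean cosine-distance penalty (an assumed choice of loss), and `appearance_input` simply concatenates the conditioning signals that a hypothetical appearance MLP would consume. All function names here are illustrative.

```python
# Illustrative sketch only: the loss form and input layout are assumptions,
# not taken from the CoDyNeRF paper or code.
import math
from typing import List, Sequence

Vec3 = Sequence[float]


def normalize(v: Vec3) -> List[float]:
    """Scale a 3-vector to unit length (returns zeros for a zero vector)."""
    n = math.sqrt(sum(c * c for c in v))
    return [c / n for c in v] if n > 0 else [0.0, 0.0, 0.0]


def normal_prior_loss(pred: Sequence[Vec3], prior: Sequence[Vec3]) -> float:
    """Hypothetical regularizer: mean (1 - cosine similarity) between
    predicted normals and coarse 3DMM prior normals.

    Equals 0 when every predicted normal matches its prior direction and
    reaches 2 when they point in opposite directions.
    """
    if len(pred) != len(prior) or not pred:
        raise ValueError("need equally sized, non-empty normal lists")
    total = 0.0
    for p, q in zip(pred, prior):
        p_hat, q_hat = normalize(p), normalize(q)
        total += 1.0 - sum(a * b for a, b in zip(p_hat, q_hat))
    return total / len(pred)


def appearance_input(canonical_feat, normal, expression, pose_deform) -> List[float]:
    """Concatenate conditioning signals for a hypothetical appearance MLP:
    canonical feature, unit normal, expression code, head-pose deformation."""
    return (list(canonical_feat) + normalize(normal)
            + list(expression) + list(pose_deform))
```

For example, `normal_prior_loss([[0, 0, 1]], [[0, 0, 2]])` is `0.0` because only direction matters after normalization. A cosine penalty is used here because a coarse prior such as a 3DMM constrains orientation rather than exact surface position; a real system might instead weight the term or anneal it during training.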

Some Results

Compared to prior work, CoDyNeRF realistically reproduces specularities, cast shadows and shading.

Below we show reanimation results across different subjects.

Citation

@article{athar2023codynerf,
  title={Controllable Dynamic Appearance for Neural 3D Portraits},
  author={Athar, ShahRukh and Shu, Zhixin and Xu, Zexiang and Luan, Fujun and Bi, Sai and Sunkavalli, Kalyan and Samaras, Dimitris},
  journal={arXiv preprint arXiv:2309.11009},
  year={2023}
}